Imagine you’re learning to tie your shoes. You could approach it two ways.

The first way: You could try every possible combination of moves, billions of times, until eventually you stumble on one that works. Your brain would be working at full capacity the entire time, burning through energy like a space heater in winter. Eventually you’d figure it out, but you’d be exhausted.

The second way: Someone shows you the logic—“left over right, pull tight, make a loop”—and suddenly you get it. You understand why each step matters. You can apply that same logic to other things too. And you barely break a sweat doing it.

For the last five years, artificial intelligence has mostly been doing the first thing: using brute force. And it’s been working… sort of. But there’s a problem that doesn’t get nearly enough attention: AI is becoming an electricity monster.

Training a single large AI model can now use as much electricity as a small neighborhood. Running these models costs a fortune in electricity. And the electrical grid will struggle to keep up if this trend continues. It’s like we’ve built incredibly smart robots that are also incredibly thirsty for power, and we’re running out of water.

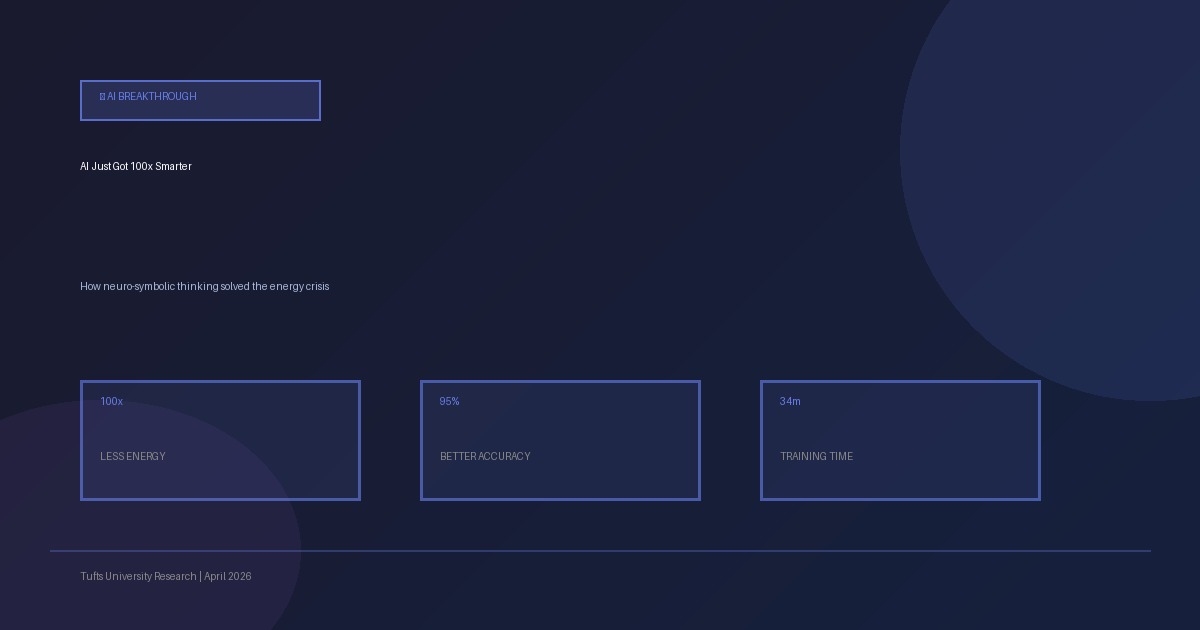

Then, in April 2026, a team at Tufts University did something remarkable. They figured out how to teach AI to think more like a human—by actually using logic instead of just pattern-matching. And here’s the wild part: the system used 100 times less energy and got smarter in the process.

Here’s How They Did It

For years, we’ve been obsessed with one idea: make the AI bigger, feed it more data, and it’ll get better. This worked for ChatGPT and Claude and all these massive language models. But robots are different.

When a robot is trying to pick up objects and stack them, or navigate through a room, or understand what you’re asking it to do, it’s not just recognizing patterns. It’s solving puzzles. It’s reasoning through steps. It’s kind of like how you solve a problem—you break it into pieces, think through the logic, and figure it out.

The researchers realized: “Wait… what if we let the robot think that way instead of forcing it to brute-force everything?”

They took a robot that normally uses massive neural networks and said: “Let’s add some real logic to this.” They combined symbolic AI—basically telling the robot explicit rules about how things work—with neural networks that learn from examples.

So now you’ve got a system that says: “Here’s what I learned from a billion examples, AND here are the logical rules I know about how the world works. Let me use both to make a decision.”
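
To make that idea concrete, here is a minimal sketch in Python. It is not the Tufts team’s code. It assumes a toy block-stacking world, and every name in it (`Move`, `is_legal`, `choose_move`) is made up for illustration. The `scores` dictionary stands in for what a neural network might output, and the rules are the hand-written “symbolic” part.

```python
from dataclasses import dataclass

# Hypothetical toy world: `state` maps each block to what it rests on.

@dataclass(frozen=True)
class Move:
    block: str
    target: str  # another block, or "table"

def is_clear(state, block):
    """A block is clear when nothing rests on top of it."""
    return all(support != block for support in state.values())

def is_legal(state, move):
    """Symbolic side: explicit, hand-written rules about stacking."""
    if move.block == move.target:
        return False  # a block cannot sit on itself
    if not is_clear(state, move.block):
        return False  # cannot lift a block that has something on it
    if move.target != "table" and not is_clear(state, move.target):
        return False  # cannot stack onto a covered block
    if state[move.block] == move.target:
        return False  # the block is already there
    return True

def choose_move(state, scores):
    """Pick the highest-scoring move among those the rules allow."""
    legal = {m: s for m, s in scores.items() if is_legal(state, m)}
    if not legal:
        return None
    return max(legal, key=legal.get)

# B sits on A; A and C sit on the table.
state = {"A": "table", "B": "A", "C": "table"}

# Neural side (stand-in): made-up preference scores for candidate moves.
scores = {
    Move("A", "C"): 0.9,  # top score, but A still has B on top of it
    Move("B", "C"): 0.7,
    Move("C", "B"): 0.4,
}

best = choose_move(state, scores)
print(best)  # Move(block='B', target='C')
```

The learned scores prefer moving A, but the rules know A is pinned under B, so the system settles on the best move that is actually possible. In this sketch the rules act as a filter on the learned preferences; real hybrid systems can combine the two sides in other ways.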

The results were stunning.

The Numbers

- 100x less energy - The system went from using as much power as a house to using as much power as a laptop

- Better accuracy - Counterintuitively, using LESS computing power made it MORE accurate

- Faster training - Models that took weeks to train now took hours

- Real-world improvement - Robots could solve real problems way faster

Imagine if every AI system out there cut its energy use by 100x. The electricity grid wouldn’t be overloaded. Data centers would throw off far less heat and need far less cooling. The carbon footprint of AI would shrink dramatically.

Why Hasn’t Anyone Done This Before?

This sounds obvious, right? Why not just combine neural networks with logic?

It’s actually been a huge battle in AI. For the last decade, the deep learning crowd (big neural networks) and the symbolic AI crowd (logic-based systems) have been kind of… not talking to each other. Deep learning won because it scaled. You could throw more data at it and it would get better. But there were always problems it struggled with—especially problems that required real reasoning.

Symbolic AI could do logic perfectly but couldn’t handle real-world messiness.

The Tufts team realized: you don’t have to pick one. You can use both. And when you do, something magical happens.

What Changes Now

This isn’t just a neat lab result. This is the kind of breakthrough that rewires how people build AI.

We’re already seeing companies starting to integrate these hybrid approaches. Microsoft has invested in neuro-symbolic AI research. OpenAI has been quietly experimenting with it. Google has papers on it.

If this approach scales the way it looks like it will, we could see:

- Way less energy usage - which means AI becomes cheaper and more accessible

- Better reasoning - AI systems that actually understand WHY they’re making decisions

- Safer AI - when you add explicit logical constraints, you can check in advance that a planned action doesn’t break them

- Smaller models - you don’t need billion-parameter models to solve every problem
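
The “Safer AI” point is easier to see with a tiny example. The sketch below is an illustration, not anything from the Tufts work. It assumes a robot on a 3x3 grid with one no-go cell, and the names `FORBIDDEN`, `STEPS`, and `first_violation` are invented for this example. Because the safety rule is written down explicitly, a whole plan can be checked before the robot moves at all.

```python
# Hypothetical setup: a 3x3 grid with one cell the robot must never enter.
FORBIDDEN = {(1, 1)}
WIDTH, HEIGHT = 3, 3
STEPS = {"up": (0, 1), "down": (0, -1), "left": (-1, 0), "right": (1, 0)}

def first_violation(start, plan):
    """Return the index of the first unsafe step, or None if the plan is safe."""
    x, y = start
    for i, action in enumerate(plan):
        dx, dy = STEPS[action]
        x, y = x + dx, y + dy
        inside = 0 <= x < WIDTH and 0 <= y < HEIGHT
        if not inside or (x, y) in FORBIDDEN:
            return i
    return None

safe_plan = ["right", "right", "up", "up"]
unsafe_plan = ["right", "up", "up"]

print(first_violation((0, 0), safe_plan))    # None
print(first_violation((0, 0), unsafe_plan))  # 1
```

The second plan is rejected at step 1, the moment it would enter the forbidden cell. A purely learned system offers no such rule to check a plan against, which is why explicit constraints are attractive for safety.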

The Bigger Picture

This is a reminder that the future of AI probably isn’t just “keep making bigger neural networks.” It’s about combining different approaches into systems that are smarter and more efficient.

The energy crisis in AI isn’t something that needs a newer, fancier chip. It needs smarter engineering. And the Tufts team just showed us what that looks like.

The robots are getting smarter. And they’re finally learning to think instead of just brute-forcing their way through problems.

Sources:

- Tufts University research on Neuro-Symbolic AI

- Nature Machine Intelligence publications

- IEEE Spectrum coverage of AI energy consumption
